Crawl depth is the number of clicks it takes for a search engine crawler to reach a page starting from the homepage, and it directly affects whether that page gets crawled and indexed. The deeper a page sits in a site’s structure, the less likely it is to be discovered and indexed consistently by search engines. This guide covers what crawl depth means in SEO, why it matters for indexation, what a good crawl depth looks like, and how to audit and improve it across your site.

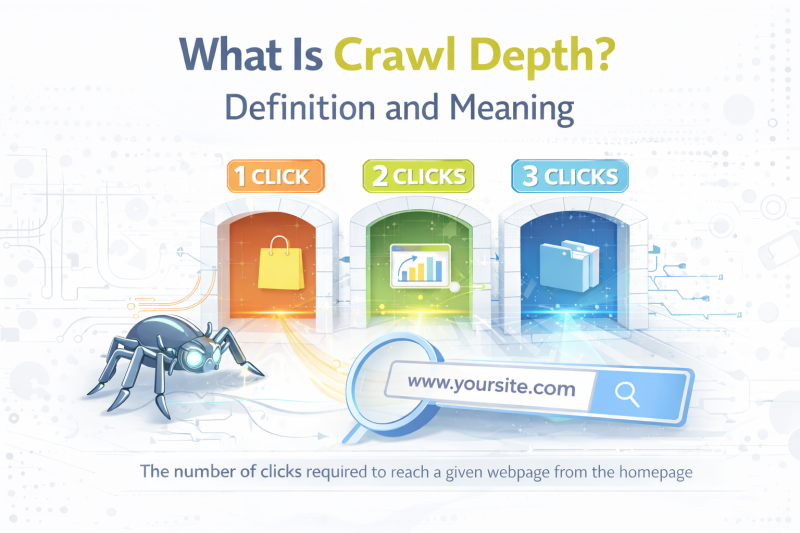

What Is Crawl Depth? Definition and Meaning

Crawl depth refers to how many clicks away a page is from the homepage. A page sitting one click from the homepage has a crawl depth of 1. A page reachable only after clicking through a category, a subcategory, and then a product listing has a crawl depth of 3.

The concept is straightforward, but its implications for SEO are significant. Search engine crawlers like Googlebot follow links to discover and revisit pages. The more links a crawler has to follow to reach a page, the more crawl resources are consumed, and the less likely that page is to be crawled frequently or at all.

Crawl depth vs crawl budget. These two terms are related but distinct. Crawl depth describes the structural position of a page within your site. Crawl budget refers to the number of pages Googlebot is willing to crawl on your site within a given timeframe. A site with poor crawl depth management wastes crawl budget on navigation and intermediate pages, leaving fewer resources for the content pages that actually need to be indexed.

A simple example. Consider a blog with the following structure:

- Homepage (depth 0)

- Blog index page (depth 1)

- Category page (depth 2)

- Individual blog post (depth 3)

This is a reasonable structure. Now add one or two subcategory layers between the category page and individual posts, and those posts move to depth 4 or 5. For a large site with thousands of posts, pages at depth 5 and beyond may rarely be crawled.
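Click depth in this sense is the shortest link path from the homepage, which a breadth-first search over the internal link graph can compute. The following is a minimal sketch, assuming you already have the link graph as a dictionary; the URLs and the `crawl_depths` name are illustrative, not taken from any particular tool.

```python
from collections import deque


def crawl_depths(links, start="/"):
    """Return {url: depth} using breadth-first search from the start page.

    links maps each page URL to the list of internal URLs it links to.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is always the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical link graph mirroring the blog example above
site = {
    "/": ["/blog/"],
    "/blog/": ["/blog/seo/"],
    "/blog/seo/": ["/blog/seo/crawl-depth/"],
}

for url, depth in crawl_depths(site).items():
    print(depth, url)
```

Pages that never appear in the result are unreachable by links from the homepage, which is a useful signal in its own right.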

Why Crawl Depth Matters for SEO

Crawl depth is not just a technical concern. It has direct consequences for how well your content performs in search.

Pages at greater depth get crawled less frequently. Googlebot allocates crawl resources based on a combination of page authority, internal link signals, and site structure. Pages buried deep in a site’s hierarchy receive fewer crawl visits, which means updates to those pages take longer to be reflected in search results and new pages in deep positions may take weeks or months to be indexed.

Deep pages signal lower importance to crawlers. When a page requires many clicks to reach from the homepage, it implicitly signals to search engines that it is less important than pages closer to the surface. This affects not just crawl frequency but also how much authority flows to that page through internal links.

Crawl depth affects indexation directly. A page that is rarely crawled is a page at risk of not being indexed or being dropped from the index over time. For large sites with thousands of pages, poor crawl depth management is one of the most common reasons why significant portions of a content library never rank or generate traffic.

New content suffers the most. When you publish a new page deep in your site structure with few or no internal links pointing to it, Googlebot may not discover it for weeks. On a site with a shallow, well-linked structure, new content is typically indexed within days.


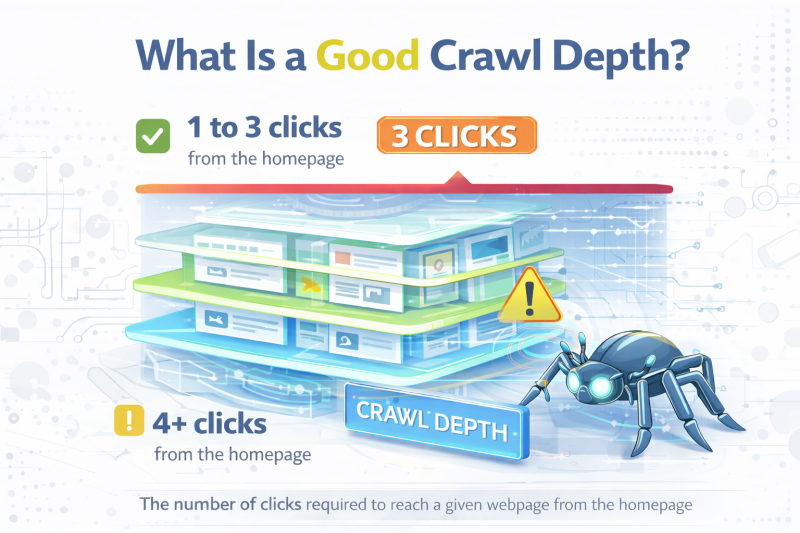

What Is a Good Crawl Depth?

The widely referenced benchmark in SEO is three clicks. Pages that can be reached within three clicks from the homepage are generally considered well-positioned for crawling and indexation. This does not mean every page on your site needs to be within three clicks, but it is a practical target for your most important content.

A general reference framework:

Depth 0 to 2: Homepage (depth 0), main category pages, top-level landing pages. These pages receive the most crawl attention and carry the most internal authority. Reserve this depth for your highest-priority pages.

Depth 3: Most blog posts, product pages, and supporting content should ideally sit here. This is the practical limit for content you want indexed reliably and ranked competitively.

Depth 4 to 5: Acceptable for lower-priority content on large sites, but these pages require deliberate internal linking to ensure they are crawled consistently. Archive pages, older content, and paginated pages often fall here.

Depth 6 and beyond: Pages at this depth are at real risk of being ignored by crawlers, especially on sites with limited crawl budget. If important content sits here, it needs to be restructured or brought closer to the surface through internal linking.

The right crawl depth target also depends on site size. A 50-page site does not need to worry about this. A site with 50,000 pages needs to treat crawl depth as a core part of its technical SEO strategy.
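To apply the framework above to real data, you can group pages into the same depth bands and count them. This is a rough sketch: the band labels are paraphrased from the list above, and the `depths` dictionary is made-up sample data standing in for a crawl export.

```python
from collections import Counter


def depth_band(depth):
    """Map a click depth to one of the bands in the reference framework."""
    if depth <= 2:
        return "top level (0-2)"
    if depth == 3:
        return "core content (3)"
    if depth <= 5:
        return "needs deliberate linking (4-5)"
    return "at risk (6+)"


def summarize(depths):
    """Count how many pages fall into each band. depths is {url: depth}."""
    return Counter(depth_band(d) for d in depths.values())


# Hypothetical sample data
depths = {"/": 0, "/blog/": 1, "/blog/post-a/": 3, "/archive/2019/p4/post-z/": 6}
for band, count in summarize(depths).items():
    print(f"{band}: {count}")
```

A large share of pages in the last two bands is usually the cue to look at internal linking before anything else.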

How Internal Linking Improves Crawl Depth and Indexation

Internal linking is the most direct and practical way to improve crawl depth without restructuring your entire site. It works by creating shorter paths for crawlers to reach pages that would otherwise require many clicks to access.

Internal links create shortcuts for crawlers. When a page at depth 5 receives an internal link from a page at depth 2, the effective crawl depth of that deep page is reduced. Googlebot can now reach it in three clicks instead of five. This single change can meaningfully increase the frequency with which that page is crawled.
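The arithmetic behind this shortcut is simple: a page's depth is its shortest path from the homepage, so a new link from a page at depth s can bring the target to depth s + 1 at best. A small sketch (the function name is illustrative):

```python
def depth_after_new_link(current_depth, source_depth):
    """Depth of a page after it gains a link from a page at source_depth.

    The new path is one click longer than the source page's depth; the page
    keeps its old depth if that was already shorter.
    """
    return min(current_depth, source_depth + 1)


print(depth_after_new_link(current_depth=5, source_depth=2))  # 3
```

The same logic shows why a link from a deeper page does nothing for depth: a depth 3 page linked from a depth 4 page stays at depth 3.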

Link from high-authority pages to deep content. The most impactful internal links for crawl depth purposes are those coming from pages that are already crawled frequently, such as your homepage, top-level category pages, or high-traffic cornerstone pages. A link from one of these pages to a deep piece of content pulls it closer to the surface of your site’s crawl hierarchy.

Cornerstone pages and topic clusters reduce effective crawl depth. A well-structured topic cluster, where a cornerstone page links to all supporting articles and supporting articles link back to the cornerstone, naturally keeps content within a shallow crawl depth. Every supporting article is one click from the cornerstone, so if the cornerstone is linked from the homepage or a top-level navigation page, the entire cluster sits within two to three clicks of the homepage.

Internal linking directly supports indexation. Pages with no internal links pointing to them, known as orphan pages, are among the hardest for crawlers to find and the most likely to be missing from the index. Adding even a single contextual internal link from a relevant, well-crawled page is often enough to trigger indexation for a page that has been sitting unindexed for months.
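One way to surface orphan pages is to compare the URLs you know exist (from your CMS or sitemap) with the URLs that receive at least one internal link. The sketch below assumes both lists are already available; `known_urls` and `links` are hypothetical inputs, not the output format of any specific tool.

```python
def find_orphans(known_urls, links, homepage="/"):
    """Return known URLs that no other page links to.

    known_urls: set of URLs expected to exist on the site.
    links: {source_url: [target_urls]} describing internal links.
    """
    linked = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(u for u in known_urls if u not in linked and u != homepage)


# Hypothetical sample data
known_urls = {"/", "/guides/", "/guides/crawl-depth/", "/guides/old-checklist/"}
links = {
    "/": ["/guides/"],
    "/guides/": ["/guides/crawl-depth/", "/"],
}
print(find_orphans(known_urls, links))
```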

Practical approach. When auditing crawl depth, prioritize adding internal links to pages that are both important and currently sitting at depth 4 or beyond. These are your highest-leverage opportunities. A single internal link from a shallow, authoritative page can reduce the effective crawl depth of a deep page immediately.

Other Factors That Affect Crawl Depth

Internal linking is the primary lever, but several other factors influence how deep pages sit in your crawl hierarchy.

Site structure and navigation. The number of category levels in your site architecture directly determines the minimum crawl depth of your deepest pages. A flat site structure, where most content is accessible within two to three clicks from the homepage, is inherently easier for crawlers to process than a deeply nested structure with multiple subcategory layers.

XML sitemap. A well-maintained XML sitemap helps Googlebot discover pages regardless of their crawl depth. Submitting your sitemap through Google Search Console ensures that even deep pages are known to Google, though it does not guarantee they will be crawled as frequently as shallower pages. A sitemap is a discovery tool, not a substitute for good internal linking.
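If you want to compare sitemap contents against crawl data, the URL list can be read with Python's standard library. This is a minimal sketch that assumes a plain URL sitemap using the standard sitemaps.org namespace (not a sitemap index file); the sample XML is invented for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return the list of <loc> URLs found in a URL sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


# Hypothetical sitemap content
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/crawl-depth/</loc></url>
</urlset>"""

print(sitemap_urls(sample))
```

Any important URL found by a crawl at depth 4 or beyond but absent from this list is worth adding to the sitemap.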

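Pagination. Paginated content, such as blog archives, product category listings, and search result pages, can push individual content pages deeper in the crawl hierarchy if not handled correctly. Google has said it no longer uses rel="next" and rel="prev" markup as an indexing signal, so what matters is that every page in a paginated series is reachable through plain, crawlable links. Linking to more than just the next page, for example with numbered page links, helps crawlers navigate the series without consuming excessive crawl budget.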

Faceted navigation. E-commerce sites with faceted navigation, where users can filter by attributes like color, size, or price, can generate enormous numbers of URLs. Without proper controls such as robots.txt rules, canonical tags, or noindex tags, these URLs can consume crawl budget and push important product pages deeper in the effective crawl hierarchy. Note that Google Search Console’s URL Parameters tool was retired in 2022, so parameter handling can no longer be configured there.
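To get a sense of how much of a crawl is taken up by facet URLs, you can group crawled URLs by path and count the filtered variants. The sketch below assumes facets are expressed as query parameters named `color`, `size`, and `price`; those names are placeholders to replace with your own.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlsplit


def facet_variants(urls, facet_params=("color", "size", "price")):
    """Return {path: number of distinct URLs carrying a facet parameter}."""
    facets = set(facet_params)
    variants = defaultdict(set)
    for url in urls:
        parts = urlsplit(url)
        params = {key for key, _ in parse_qsl(parts.query)}
        if params & facets:  # at least one facet parameter present
            variants[parts.path].add(url)
    return {path: len(found) for path, found in variants.items()}


# Hypothetical crawled URLs
crawled = [
    "/shoes",
    "/shoes?color=red",
    "/shoes?color=blue&size=9",
    "/blog?page=2",
]
print(facet_variants(crawled))
```

Paths with hundreds of variants are the first candidates for crawl controls.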

JavaScript rendering. Pages that rely heavily on JavaScript for their navigation or internal links create an additional layer of crawl complexity. Googlebot can render JavaScript, but it does so in a separate, delayed queue. Links that only appear after JavaScript rendering are discovered more slowly, effectively increasing the crawl depth of pages that depend on them.

How to Audit and Improve Crawl Depth

Improving crawl depth starts with understanding your current structure and identifying where problems exist.

Step 1: Crawl your site with Screaming Frog. Screaming Frog’s SEO Spider tool shows the crawl depth of every page on your site. Run a full crawl and filter by crawl depth to identify pages sitting at depth 4 and beyond. Export this list and cross-reference it with the Page indexing report in Google Search Console to find pages that are both deep and missing from the index.
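The cross-referencing in this step can be scripted once both exports exist. The sketch below assumes the crawl export is a CSV with `Address` and `Crawl Depth` columns and that you have a separate set of indexed URLs; check the column names against your own export, since they are assumptions here.

```python
import csv
import io


def deep_unindexed(crawl_csv, indexed_urls, min_depth=4):
    """Return URLs at or beyond min_depth that are not in indexed_urls."""
    rows = csv.DictReader(io.StringIO(crawl_csv))
    return [
        row["Address"]
        for row in rows
        if int(row["Crawl Depth"]) >= min_depth
        and row["Address"] not in indexed_urls
    ]


# Hypothetical export and index data
sample_export = (
    "Address,Crawl Depth\n"
    "https://example.com/,0\n"
    "https://example.com/guides/a,4\n"
    "https://example.com/guides/b,5\n"
    "https://example.com/guides/c,2\n"
)
indexed = {"https://example.com/", "https://example.com/guides/b"}
print(deep_unindexed(sample_export, indexed))
```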

Step 2: Identify your most important deep pages. Not all deep pages are a problem. Focus your attention on pages that are strategically important but structurally buried. These are your high-value blog posts, key product pages, or important landing pages that are not receiving the crawl attention they deserve.

Step 3: Add internal links from shallow pages. For each important deep page you identify, find relevant pages at depth 1 to 3 that could naturally link to it. Add contextual internal links with descriptive anchor text. Even one or two well-placed links from shallow, frequently crawled pages can significantly improve the crawl situation for a deep page.

Step 4: Review and flatten your site architecture where possible. If your category structure has more than three levels, consider whether all levels are necessary. Consolidating subcategories or reducing the number of pagination layers can bring content closer to the surface structurally.

Step 5: Update your XML sitemap. Ensure your sitemap includes all important pages and is free of URLs that return errors or redirects. Submit the updated sitemap through Google Search Console and monitor the Page indexing report for improvements over the following weeks.

Step 6: Monitor with Google Search Console. The Page indexing report (formerly the Coverage report) in Google Search Console shows which pages are indexed, which are not, and why. Cross-referencing this with your crawl depth data helps you track whether your improvements are having the desired effect on indexation.

Common Crawl Depth Mistakes to Avoid

Even sites with good technical foundations can create crawl depth problems through avoidable structural decisions.

Too many category levels. Adding subcategory layers to organize content makes intuitive sense from a UX perspective, but each additional layer pushes content pages deeper. Before adding a new category level, consider whether the organizational benefit justifies the crawl depth cost.

Orphan pages with no internal links. Pages published without any internal links pointing to them are effectively invisible to crawlers unless they are in your sitemap. This is one of the most common causes of indexation failures on content-heavy sites. Every page you publish should receive at least one contextual internal link from a relevant, already-indexed page.

Pagination handled incorrectly. Relying solely on paginated archives to make content discoverable means individual posts or products are only reachable after multiple pagination clicks. Supplement pagination with contextual internal links from relevant content pages to ensure important pages have a direct path to the surface.
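A quick calculation shows how fast pagination adds depth. The sketch below assumes the worst case, where each archive page links only to the next page and items are reachable only through the archive; the function name and parameters are illustrative.

```python
import math


def paginated_depth(position, per_page, archive_depth=1):
    """Click depth of the item at a given position in a paginated archive.

    Assumes the archive's first page sits at archive_depth and each page
    links only to the next one, so page N costs N - 1 extra clicks.
    """
    page = math.ceil(position / per_page)
    return archive_depth + (page - 1) + 1  # +1 for the click onto the item


print(paginated_depth(position=5, per_page=10))   # 2
print(paginated_depth(position=95, per_page=10))  # 11
```

Under these assumptions the 95th post on a 10-per-page archive sits 11 clicks deep, which is why a direct contextual link makes such a difference.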

JavaScript-dependent navigation. If your primary navigation or internal links are rendered via JavaScript, crawlers may not follow them reliably or promptly. Ensure that your most important internal links are available in the HTML source, not dependent on JavaScript execution.
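A simple check is to parse the raw HTML, without executing any JavaScript, and see which links are present. The sketch below uses Python's built-in `html.parser`; the sample markup is invented, and a real audit would fetch the page source first.

```python
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collect href values from <a> tags in raw HTML."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def links_in_source(html):
    """Return links present in the HTML source, before any script runs."""
    parser = LinkExtractor()
    parser.feed(html)
    return parser.links


# Hypothetical page: one plain link, one script-driven button
sample_html = """
<nav><a href="/blog/">Blog</a></nav>
<button onclick="location.href='/deep-page/'">More</button>
"""
print(links_in_source(sample_html))
```

Here only `/blog/` is found; the destination behind the button exists only as script, so a crawler reading the source has no link to follow.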

Ignoring crawl depth on large content migrations. When migrating a site to a new CMS or restructuring your URL architecture, crawl depth should be part of the planning process. Migrations that add category layers or break existing internal link structures can significantly increase crawl depth for large portions of your content, leading to temporary or permanent indexation drops.

Final Thoughts

Crawl depth is one of those technical SEO factors that is easy to overlook until it becomes a real problem. For small sites, it rarely matters. For sites with hundreds or thousands of pages, it can be the difference between a content library that ranks and one that barely gets crawled.

The fix is rarely complicated. A flatter site structure, deliberate internal linking, and a well-maintained sitemap will resolve most crawl depth issues. The key is identifying which pages are buried and taking the steps to bring them closer to the surface before poor crawl depth compounds into a wider indexation problem.


Frequently Asked Questions

What is crawl depth in SEO?

Crawl depth is the number of clicks it takes for a search engine crawler to reach a page starting from the homepage. A page reachable in one click has a crawl depth of 1. The deeper a page sits in the site structure, the less frequently it tends to be crawled and the harder it is to index reliably.

What is a good crawl depth?

The generally accepted benchmark is three clicks or fewer from the homepage. Pages within this range are typically crawled frequently and indexed reliably. Pages at depth 4 or beyond may still be indexed, but they require deliberate internal linking and sitemap inclusion to ensure consistent crawl coverage.

Does crawl depth affect SEO rankings?

Not directly. Crawl depth does not appear in Google’s ranking algorithm as a direct factor. However, it affects whether a page gets crawled and indexed at all, which is a prerequisite for ranking. A page that is rarely crawled is also unlikely to have its updates reflected quickly in search results, which can hurt its competitive performance over time.

How does internal linking affect crawl depth?

Internal links create shorter paths for crawlers to reach deep pages. When a page at depth 5 receives a link from a page at depth 2, crawlers can now reach it in three clicks instead of five. This effectively reduces the page’s crawl depth and increases how frequently it is visited by search engine crawlers.

What is the difference between crawl depth and crawl budget?

Crawl depth describes the structural position of a page within your site. Crawl budget refers to the number of pages Googlebot will crawl on your site within a given period. Poor crawl depth management wastes crawl budget on intermediate pages, leaving fewer resources for the content pages that matter most.

How do I check crawl depth?

Screaming Frog SEO Spider is one of the most practical tools for checking crawl depth across an entire site. Run a full crawl and filter results by crawl depth to identify pages sitting deeper than three clicks. Google Search Console’s Page indexing report (formerly the Coverage report) can help you cross-reference deep pages with indexation status to prioritize which issues to address first.

I’m Jackson Avery, and I have 5 years of experience in content SEO. At SEONetwork, I share practical SEO knowledge, insights, and content strategies to help readers better understand search intent, content optimization, and sustainable organic growth.